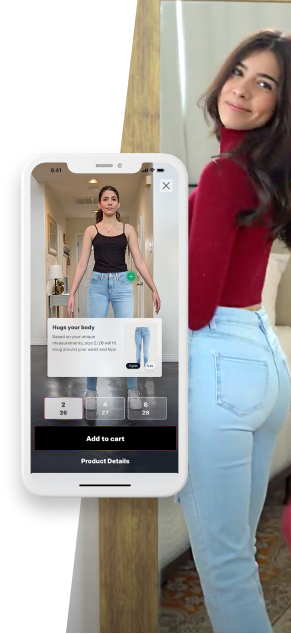

Key tech pillars of our solution

Computer Vision & Deep Learning

We use computer vision and deep learning to analyze photos and extract body measurements and detailed body shape data from them. Our advanced computer vision algorithms detect a clothed human body in photos taken with any smartphone against any background. Neural networks then determine body landmarks and produce a set of probability maps.
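The page does not say how landmark positions are read from the probability maps. A common approach, assumed here purely for illustration, is to take the peak (argmax) of each map as the landmark location. The function name `landmarks_from_maps` and the toy maps are hypothetical, not part of the described product.

```python
from typing import List, Tuple

# A probability map is a 2D grid of per-pixel probabilities for one landmark.
ProbabilityMap = List[List[float]]


def landmarks_from_maps(maps: List[ProbabilityMap]) -> List[Tuple[int, int, float]]:
    """Return (row, col, probability) at the peak of each probability map.

    Hypothetical helper: taking the argmax is one common way to turn
    landmark probability maps into point locations.
    """
    landmarks = []
    for prob_map in maps:
        best = (0, 0, float("-inf"))
        for r, row in enumerate(prob_map):
            for c, p in enumerate(row):
                if p > best[2]:
                    best = (r, c, p)
        landmarks.append(best)
    return landmarks


# Two toy 2x2 maps, e.g. for a "shoulder" and a "hip" landmark.
maps = [
    [[0.1, 0.2], [0.7, 0.0]],
    [[0.9, 0.05], [0.03, 0.02]],
]
print(landmarks_from_maps(maps))  # [(1, 0, 0.7), (0, 0, 0.9)]
```

Production systems often refine the peak to sub-pixel accuracy; the plain argmax keeps the sketch short.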

Proprietary Statistical Modeling

We use proprietary statistical modeling to generate human models of arbitrary complexity. Our full pipeline starts with registering raw scans, and our dataset of raw scans keeps growing thanks to our scanning lab. We also use our statistical modeling to generate synthetic data.
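The proprietary model itself is not described here. As an assumption for illustration only, the sketch uses a widely known form of statistical body model: a linear (PCA-style) model in which a body shape is a mean shape plus a weighted sum of shape components. Sampling the weights from per-component Gaussians is one simple way to produce synthetic shapes. `synthesize_shape`, `sample_synthetic_shape`, and the toy model are hypothetical.

```python
import random
from typing import List, Sequence


def synthesize_shape(mean: Sequence[float],
                     components: Sequence[Sequence[float]],
                     coeffs: Sequence[float]) -> List[float]:
    """Linear shape model: mean + sum_k coeffs[k] * components[k].

    Shapes are flattened vertex coordinates [x0, y0, z0, x1, y1, z1, ...].
    """
    if len(components) != len(coeffs):
        raise ValueError("need one coefficient per component")
    shape = list(mean)
    for comp, b in zip(components, coeffs):
        if len(comp) != len(shape):
            raise ValueError("component length must match mean shape")
        for i, v in enumerate(comp):
            shape[i] += b * v
    return shape


def sample_synthetic_shape(mean: Sequence[float],
                           components: Sequence[Sequence[float]],
                           stddevs: Sequence[float],
                           rng: random.Random) -> List[float]:
    """Draw each coefficient from N(0, stddevs[k]) to create a synthetic shape."""
    coeffs = [rng.gauss(0.0, s) for s in stddevs]
    return synthesize_shape(mean, components, coeffs)


# Toy model: a single vertex and two shape components.
mean = [0.0, 0.0, 0.0]
components = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
print(synthesize_shape(mean, components, [2.0, 3.0]))  # [2.0, 3.0, 0.0]
synthetic = sample_synthetic_shape(mean, components, [1.0, 0.5], random.Random(0))
```

A real body model would have thousands of vertices and components learned from registered scans; the toy model only shows the arithmetic.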

Machine Learning & 3D Matching

We use machine learning & 3D matching to build a unique 3D model of each scanned customer from the detected landmarks, which allows us to obtain accurate body measurements.
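The matching method is not specified on this page. The sketch below is a simplified stand-in, not the described system: it assumes a linear shape model whose landmark positions depend linearly on shape coefficients, and that 3D landmark positions are already available. It fits the coefficients by ridge-regularized least squares, rebuilds the landmarks, and reads off one measurement as the distance between two landmarks. All function names and the toy numbers (a heel and a head landmark, in hypothetical centimeters) are illustrative.

```python
import math
from typing import List, Sequence


def solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a small dense system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_coefficients(mean: Sequence[float], basis: Sequence[Sequence[float]],
                     observed: Sequence[float], reg: float = 1e-6) -> List[float]:
    """Minimize ||mean + sum_k beta_k * basis[k] - observed||^2 + reg * ||beta||^2."""
    k = len(basis)
    residual = [o - mu for o, mu in zip(observed, mean)]
    # Normal equations (B B^T + reg I) beta = B residual; reg keeps them solvable.
    lhs = [[sum(x * y for x, y in zip(basis[i], basis[j])) + (reg if i == j else 0.0)
            for j in range(k)] for i in range(k)]
    rhs = [sum(x * r for x, r in zip(basis[i], residual)) for i in range(k)]
    return solve(lhs, rhs)


def reconstruct(mean: Sequence[float], basis: Sequence[Sequence[float]],
                coeffs: Sequence[float]) -> List[float]:
    """Landmark coordinates implied by the fitted coefficients."""
    return [mu + sum(c * comp[i] for c, comp in zip(coeffs, basis))
            for i, mu in enumerate(mean)]


def landmark_distance(points: Sequence[float], i: int, j: int) -> float:
    """Euclidean distance between landmarks i and j in a flat [x, y, z, ...] list."""
    return math.dist(points[3 * i:3 * i + 3], points[3 * j:3 * j + 3])


# Toy model: landmark 0 = heel, landmark 1 = head; one component raises the head.
mean = [0.0, 0.0, 0.0, 0.0, 170.0, 0.0]
basis = [[0.0, 0.0, 0.0, 0.0, 1.0, 0.0]]
observed = [0.0, 0.0, 0.0, 0.0, 180.0, 0.0]
coeffs = fit_coefficients(mean, basis, observed)
height = landmark_distance(reconstruct(mean, basis, coeffs), 0, 1)
```

With the tiny regularizer, `height` comes out just under 180. A real pipeline would fit a full mesh to 2D detections from several photos and measure along the surface (for example, girths), which this sketch does not attempt.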